Quickly fetching a web page:

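# -*- coding: utf-8 -*-

import urllib.request

file = urllib.request.urlopen('http://www.baidu.com')

data = file.read()  # read the whole response body

dataline = file.readline()  # read one line (returns b'' here, because read() has already consumed the body)

fhandle = open('./1.html', 'wb')  # save the fetched page locally

fhandle.write(data)

fhandle.close()  # the parentheses are required; a bare fhandle.close does not close the file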
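Two details of the script above are easy to check offline: `readline()` returns nothing once `read()` has consumed the body, and a `with` block closes the file without an explicit `close()` call. This is only a minimal sketch. `io.BytesIO` stands in for the object returned by `urlopen` (an assumption, so that nothing is fetched from the network), the sample HTML is made up, and `save_bytes` is a hypothetical helper name.

```python
import io
import os
import tempfile


def save_bytes(path, data):
    """Write bytes to path; the with block closes the file even on error."""
    with open(path, 'wb') as fhandle:
        fhandle.write(data)


# io.BytesIO is a stand-in for the response object (assumption: no network here).
fake_response = io.BytesIO(b'<html>\n<body>hello</body>\n</html>\n')

data = fake_response.read()          # consumes the whole body
dataline = fake_response.readline()  # nothing is left to read at this point

# Write into a temporary directory instead of the current one.
out_path = os.path.join(tempfile.mkdtemp(), '1.html')
save_bytes(out_path, data)

with open(out_path, 'rb') as saved:
    saved_data = saved.read()

print(len(data), dataline, saved_data == data)
```

If a single line is really needed, calling `readline()` before `read()` is one way to get it.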

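Simulating a browser (code from the video):

Use case: some sites add anti-crawler measures to stop others from scraping their content maliciously, but we still want to fetch those pages.

Solution: set some request headers (User-Agent) so that the request looks like it comes from a browser when visiting these sites.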

# -*- coding: utf-8 -*-

import urllib.request

url = 'http://www.baidu.com'

# the User-Agent string of the browser we want to impersonate

user_agent = 'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.96 Safari/537.36'

# build a dict that maps the 'User-Agent' request header to our user_agent string

headers = {'User-Agent': user_agent}

# create a request, passing the headers dict we just built as its headers argument

req = urllib.request.Request(url, headers=headers)

# send the request to the server

response = urllib.request.urlopen(req)

# read the response body

the_page = response.read()

print(the_page)
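To confirm that the header is really attached before any request is sent, the `Request` object can be inspected directly. This is a minimal sketch that never contacts the server. It relies on `urllib.request.Request` storing header names in capitalized form (`'User-agent'`), which is why the lookup uses that spelling.

```python
import urllib.request

user_agent = ('Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 '
              '(KHTML, like Gecko) Chrome/58.0.3029.96 Safari/537.36')

# Building a Request does not contact the server; only urlopen() would.
req = urllib.request.Request('http://www.baidu.com',
                             headers={'User-Agent': user_agent})

# Header names are stored capitalized as 'User-agent', so look them up that way.
stored = req.get_header('User-agent')
print(stored == user_agent)
print(req.header_items())
```

Without the custom header, urllib identifies itself with a `Python-urllib/...` User-Agent, which is the kind of value an anti-crawler check can reject.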

Code from the forum (simulating a browser):

# -*- coding: utf-8 -*-

import urllib.request

url = 'http://www.baidu.com'

header = {'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.96 Safari/537.36'}

request = urllib.request.Request(url, headers=header)

response = urllib.request.urlopen(request).read()

fhandle = open("./baidu.html", "wb")

fhandle.write(response)

fhandle.close()

A small Baidu crawler:

# -*- coding: utf-8 -*-

def tieba_spider(url, begin_page, end_page):

    for i in range(begin_page, end_page + 1):

        pn = 50 * (i - 1)  # the pn offset grows by 50 per page

        my_url = url + str(pn)

        print('URL about to be requested:')

        print(my_url)

if __name__ == '__main__':  # entry point

    url = input('Enter the URL: ')

    print(url)

    begin_page = int(input('Enter the start page number: '))

    end_page = int(input('Enter the end page number: '))

    tieba_spider(url, begin_page, end_page)
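The page-to-URL rule in `tieba_spider` can be tried without typing anything at a prompt. This is only a sketch of the same arithmetic. `build_page_urls` is a hypothetical helper, and the base URL is an assumed example that ends in `pn=` so that appending the offset produces a complete address.

```python
def build_page_urls(base_url, begin_page, end_page):
    """Return the URL for each page from begin_page to end_page, inclusive."""
    urls = []
    for i in range(begin_page, end_page + 1):
        pn = 50 * (i - 1)  # same offset rule as tieba_spider
        urls.append(base_url + str(pn))
    return urls


# Hypothetical base URL; it must end with 'pn=' for the concatenation to work.
base_url = 'http://tieba.baidu.com/f?kw=python&pn='
page_urls = build_page_urls(base_url, 1, 3)
for page_url in page_urls:
    print(page_url)
```

Pages 1 to 3 map to the offsets 0, 50 and 100.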

Sending the URL request:

# -*- coding: utf-8 -*-

import urllib.request

def load_page(url):

    user_agent = "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.96 Safari/537.36"

    headers = {'User-Agent': user_agent}

    req = urllib.request.Request(url, headers=headers)

    response = urllib.request.urlopen(req)

    html = response.read()  # the body is returned as bytes

    return html

def tieba_spider(url, begin_page, end_page):

    for i in range(begin_page, end_page + 1):

        pn = 50 * (i - 1)

        my_url = url + str(pn)

        my_html = load_page(my_url)

        print('============ page %d ==============' % i)

        print(my_html)

        print('==================================')

if __name__ == '__main__':

    url = input('Enter the URL: ')

    print(url)

    begin_page = int(input('Enter the start page number: '))

    end_page = int(input('Enter the end page number: '))

    tieba_spider(url, begin_page, end_page)
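A side note on the output of this script: `response.read()` returns bytes, so `print(my_html)` shows a `b'...'` literal with escaped characters rather than readable text. One possible way to decode it first is sketched below. It assumes the page is UTF-8 encoded, which may not hold for every site. `to_text` is a hypothetical helper and the sample bytes are made up.

```python
def to_text(raw, encoding='utf-8'):
    """Decode bytes to str, replacing undecodable bytes instead of raising."""
    return raw.decode(encoding, errors='replace')


# Made-up sample standing in for the bytes returned by load_page().
sample = '<title>贴吧</title>'.encode('utf-8')

text = to_text(sample)
print(sample)  # bytes literal with escape sequences
print(text)    # readable text
```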

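Problem when running the spider: the prompts for the start and end page numbers never appear.

After I type in the URL and press Enter, the web page at that URL opens directly. Could an expert please point me in the right direction?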

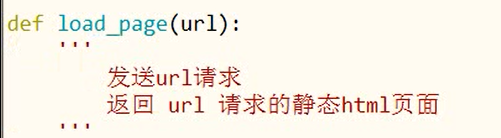

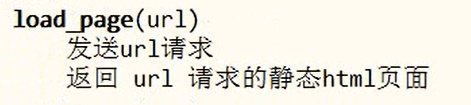

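Aside:

Put a comment (a docstring) directly under the function definition, so that when the function is called later it is easy to see what it is for.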
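A minimal sketch of what this looks like in Python: a string placed on the first line of the function body becomes its docstring, which can be read back through `__doc__` and is also what `help()` shows. `page_offset` is a hypothetical function used only for illustration.

```python
def page_offset(page_number, page_size=50):
    """Return the pn offset of a 1-based page number."""
    return page_size * (page_number - 1)


# The docstring travels with the function and can be read at run time.
doc = page_offset.__doc__
print(doc)
print(page_offset(3))
```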

Saving the text:

# -*- coding: utf-8 -*-

import urllib.request

def load_page(url):

    user_agent = "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.96 Safari/537.36"

    headers = {'User-Agent': user_agent}

    req = urllib.request.Request(url, headers=headers)

    response = urllib.request.urlopen(req)

    html = response.read()  # the body is returned as bytes

    return html

def write_to_file(file_name, txt):

    print("Saving file " + file_name)

    # 1 open the file ('wb' because load_page returns bytes)

    f = open(file_name, 'wb')  # f is the handle for the file named file_name

    # 2 write to the file

    f.write(txt)

    # 3 close the file

    f.close()

def tieba_spider(url, begin_page, end_page):

    for i in range(begin_page, end_page + 1):

        pn = 50 * (i - 1)

        my_url = url + str(pn)

        my_html = load_page(my_url)

        file_name = str(i) + '.html'

        write_to_file(file_name, my_html)  # both arguments are required

if __name__ == '__main__':

    url = input('Enter the URL: ')

    print(url)

    begin_page = int(input('Enter the start page number: '))

    end_page = int(input('Enter the end page number: '))

    tieba_spider(url, begin_page, end_page)

The same problem as described above happens here too!

Could someone who knows please explain what is going on?