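I. Installing Hadoop on a Single Node

1. Install Java

Java was already installed, so I won't cover it here; my earlier installation notes: https://ask.hellobi.com/blog/ysfyb/12008

Sometimes running jps prints bash: jps: command not found...

There are two possible causes: the environment variables are not configured, or the JDK development package is not installed.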

$yum list *openjdk-devel*

$yum install java-1.8.0-openjdk-devel.x86_64
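To tell the two causes apart, it helps to check which tools are actually on the PATH. The following is a minimal sketch; the helper name check_tool is my own and not part of any package. If java is found but jps is reported missing, the development package is the likely culprit.

```shell
# Print the location of a command if it is on PATH, otherwise "missing".
# check_tool is a hypothetical helper name used only for this illustration.
check_tool() {
    if command -v "$1" >/dev/null 2>&1; then
        command -v "$1"
    else
        echo "missing"
    fi
}

check_tool java   # the runtime
check_tool jps    # ships with the JDK development package
```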

2. Download Hadoop

$cd /spark

$wget https://mirrors.tuna.tsinghua.edu.cn/apache/hadoop/core/hadoop-3.1.1/hadoop-3.1.1.tar.gz # Hadoop version 3.1.1

$tar -xf hadoop-3.1.1.tar.gz

$vi ~/.bashrc

export HADOOP_HOME=/spark/hadoop-3.1.1

export PATH=$PATH:$HADOOP_HOME/sbin:$HADOOP_HOME/bin

$source ~/.bashrc

3. Edit the configuration files

$cd /spark/hadoop-3.1.1

$vi ./etc/hadoop/hadoop-env.sh

export JAVA_HOME=/usr/lib/jvm/java-1.8.0-openjdk-1.8.0.181-3.b13.el7_5.x86_64

$vim etc/hadoop/core-site.xml

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://localhost:9000</value>

</property>

</configuration>

$vim etc/hadoop/hdfs-site.xml

<configuration>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:/spark/hadoop-3.1.1/hadoop_data/hdfs/namenode</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:/spark/hadoop-3.1.1/hadoop_data/hdfs/datanode</value>

</property>

</configuration>

$vim etc/hadoop/mapred-site.xml

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapreduce.admin.user.env</name>

<value>HADOOP_MAPRED_HOME=$HADOOP_COMMON_HOME</value>

</property>

<property>

<name>yarn.app.mapreduce.am.env</name>

<value>HADOOP_MAPRED_HOME=$HADOOP_COMMON_HOME</value>

</property>

</configuration>

$vim etc/hadoop/yarn-site.xml

<configuration>

<!-- Site specific YARN configuration properties -->

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

</configuration>
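All of the site files above share the same layout: a configuration element wrapping name/value properties. As a rough sketch, a small shell function can print that skeleton for one property so it can be previewed before pasting into the real file. The function name hadoop_site_xml is made up for this example, and it prints only a single property, so it does not replace the multi-property files above.

```shell
# Print a minimal Hadoop site file containing one property.
# hadoop_site_xml is a hypothetical helper; $1 is the property name, $2 its value.
hadoop_site_xml() {
    cat <<EOF
<?xml version="1.0"?>
<configuration>
  <property>
    <name>$1</name>
    <value>$2</value>
  </property>
</configuration>
EOF
}

# Preview the single-node replication setting used in hdfs-site.xml.
hadoop_site_xml dfs.replication 1
```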

$vim sbin/start-dfs.sh

HDFS_DATANODE_USER=root

HDFS_DATANODE_SECURE_USER=hdfs

HDFS_NAMENODE_USER=root

HDFS_SECONDARYNAMENODE_USER=root

$vim sbin/stop-dfs.sh

HDFS_DATANODE_USER=root

HDFS_DATANODE_SECURE_USER=hdfs

HDFS_NAMENODE_USER=root

HDFS_SECONDARYNAMENODE_USER=root

$vim sbin/start-yarn.sh

YARN_RESOURCEMANAGER_USER=root

HADOOP_SECURE_DN_USER=yarn

YARN_NODEMANAGER_USER=root

4. Set up passwordless SSH login

$ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa

$cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

$chmod 600 ~/.ssh/authorized_keys
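If ssh localhost still asks for a password, the permissions on authorized_keys are worth checking first, since sshd can refuse key files with loose permissions. A minimal sketch follows, assuming GNU stat (as on CentOS) and treating mode 600 as the target; is_mode_600 is a name I made up for this example.

```shell
# Succeed only when the file's permission bits are exactly 600 (GNU stat).
# is_mode_600 is a hypothetical helper name.
is_mode_600() {
    [ "$(stat -c '%a' "$1")" = "600" ]
}

# Usage:
#   is_mode_600 ~/.ssh/authorized_keys && echo "permissions ok"
# A non-interactive login test that fails instead of prompting for a password:
#   ssh -o BatchMode=yes localhost true && echo "passwordless login works"
```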

5. Create the directories

sudo mkdir -p /spark/hadoop-3.1.1/hadoop_data/hdfs/namenode

sudo mkdir -p /spark/hadoop-3.1.1/hadoop_data/hdfs/datanode

sudo chown chris:chris -R /spark/hadoop-3.1.1

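hdfs namenode -format # format HDFS (hadoop namenode -format is the deprecated form)

6. Start Hadoop

$bin/hdfs namenode -format # format the namespace; skip if already done in step 5

$start-all.sh # start Hadoop

$jps # list the running processes

$stop-all.sh # stop Hadoop

NameNode info: http://localhost:9870/

Hadoop (YARN ResourceManager) web UI: http://localhost:8088/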

7. Test Hadoop

$hdfs dfsadmin -report # show the status of HDFS

$cd ~

$echo "hello world" > file1.txt

$echo "hello hadoop" > file2.txt

$echo "hello mapreduce" >> file2.txt

$start-all.sh

$hadoop fs -mkdir /data

$hadoop fs -put /home/chris/file* /data

$hadoop jar $HADOOP_HOME/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.1.1.jar wordcount /data /out_data

$hadoop fs -cat /out_data/part-r-00000
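To know what the job output should look like, the same count can be reproduced locally with standard tools. This is only a sketch of the idea (split on whitespace, sort, count), not how MapReduce works internally; local_wordcount is a name I made up.

```shell
# Count whitespace-separated words in the given files and print
# "word<TAB>count" sorted by word.
# local_wordcount is a hypothetical helper name.
local_wordcount() {
    cat "$@" | tr -s ' \t' '\n\n' | LC_ALL=C sort | uniq -c | awk '{print $2 "\t" $1}'
}

# Usage, from the directory holding the test files:
#   local_wordcount file1.txt file2.txt
```

For the three lines written to file1.txt and file2.txt above, this prints hadoop 1, hello 3, mapreduce 1, world 1 (one tab-separated pair per line), which is what part-r-00000 should contain as well.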

References: http://blog.sina.com.cn/s/blog_1618db3120102w7w4.html, https://www.jianshu.com/p/68087004baa0, https://blog.csdn.net/hawkDai5/article/details/79271824

II. CDH Cluster

# Machines to configure

OS        Hostname  IP

CentOS 6  master    192.168.15.212

CentOS 6  slave2    192.168.15.211

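1. Environment setup

(1) Change the hostname

$sudo vim /etc/hostname # on CentOS 6, edit /etc/sysconfig/network instead

master

$hostname # check that the hostname changed; a reboot is required

$ping master # check that the host is reachable

(2) Configure the hosts file

$sudo vi /etc/hosts # add the following to the hosts file on every machine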

192.168.15.212 master

#192.168.15.110 slave1

192.168.15.211 slave2

$ping slave2 # master should now be able to ping slave2
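Because the same entries have to be added on every machine, it can be handy to script the edit so that running it twice does not duplicate lines. A minimal sketch, assuming simple hostnames without regex metacharacters; add_host_entry is a hypothetical helper name.

```shell
# Append "ip name" to a hosts file unless an uncommented entry for the
# name already exists. add_host_entry is a hypothetical helper name.
add_host_entry() {
    file=$1
    ip=$2
    name=$3
    if ! grep -qE "^[^#]*[[:space:]]${name}([[:space:]]|\$)" "$file"; then
        printf '%s %s\n' "$ip" "$name" >> "$file"
    fi
}

# Usage (as root):
#   add_host_entry /etc/hosts 192.168.15.212 master
#   add_host_entry /etc/hosts 192.168.15.211 slave2
```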

(3) Disable IPv6

$ifconfig # inet6 entries are still visible at this point; IPv6 is not supported, so it is disabled below

#CentOS 7

$sudo vim /etc/sysctl.conf

net.ipv6.conf.all.disable_ipv6=1

$sudo vim /etc/sysconfig/network

NETWORKING_IPV6=no

$sudo vim /etc/sysconfig/network-scripts/ifcfg-ens33

IPV6INIT=yes => IPV6INIT=no

$systemctl disable ip6tables.service # disable the IPv6 firewall at boot; if it fails with "Failed to execute operation: No such file or directory", skip this step

#CentOS 6

$more /etc/modprobe.d/dist.conf

$echo " ">>/etc/modprobe.d/dist.conf

$echo "alias net-pf-10 off">>/etc/modprobe.d/dist.conf

$echo "alias ipv6 off">>/etc/modprobe.d/dist.conf

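$ifconfig # verify: if it worked, inet6 no longer appears

2. SSH configuration

Run these on master only.

$ssh-keygen # generate a key pair

$ssh-copy-id chris@slave2 # distribute the public key to every machine, including master itself

$ssh slave2 # should now connect to slave2; the username must be the same on every machine (usermod -l new_username -d /home/new_username -m old_username)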

$exit

3. Turn off the firewall and disable SELinux

(1) Turn off the firewall

#CentOS 6

$sudo service iptables stop # stop the firewall

$sudo chkconfig iptables off # do not start at boot

$chkconfig --list | grep iptables # should show: iptables 0:off 1:off 2:off 3:off 4:off 5:off 6:off

#CentOS 7

$systemctl status firewalld.service # show the firewall status: active (running)

$systemctl stop firewalld.service # stop the firewall

$systemctl disable firewalld.service # do not start the firewall at boot; status should then show Active: inactive (dead)

(2) Disable SELinux

$sudo vim /etc/selinux/config

SELINUX=enforcing => SELINUX=disabled
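The same edit can be made without opening an editor, which is convenient when it has to be repeated on every machine. A minimal sketch, assuming GNU sed; set_selinux_disabled is a hypothetical helper name. The change in the config file only takes effect after a reboot; setenforce 0 switches to permissive mode for the current session.

```shell
# Rewrite the SELINUX= line of a config file to "disabled" (GNU sed -i).
# The pattern requires "=" right after SELINUX, so SELINUXTYPE= is untouched.
# set_selinux_disabled is a hypothetical helper name.
set_selinux_disabled() {
    sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$1"
}

# Usage (as root):
#   set_selinux_disabled /etc/selinux/config
```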

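4. Install the JDK

It is best to install with rpm or yum, so that the JDK lands in the default location.

Upload the JDK package jdk-7u71-linux-x64.rpm to /opt

$sudo scp jdk-7u71-linux-x64.rpm chris@slave2:~ # copy the file to the other machines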

$ssh slave2

$sudo mv ~/jdk-7u71-linux-x64.rpm /opt

$exit

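Remove the bundled Java

$rpm -qa | grep java # list the Java packages that ship with the system

$sudo rpm -e --nodeps tzdata-java-2018g-1.el7.noarch # remove Java; clear out every Java package on all machines

Install the uploaded JDK

$sudo rpm -ivh jdk-7u71-linux-x64.rpm

$vim /etc/profile # configure the environment variables

#JAVA_HOME

export JAVA_HOME=/usr/java/jdk1.7.0_71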

export PATH=$PATH:$JAVA_HOME/bin

$source /etc/profile

$java -version

5. Install MySQL

Install on the master machine only.

Upload the MySQL bundle to /opt

$sudo mv ~/MySQL-5.6.35-1.linux_glibc2.5.x86_64.rpm-bundle.tar /opt

$rpm -qa | grep mysql # check for a bundled MySQL and remove it if present

$sudo tar -xvf MySQL-5.6.35-1.linux_glibc2.5.x86_64.rpm-bundle.tar

$sudo rpm -ivh MySQL-server-5.6.35-1.linux_glibc2.5.x86_64.rpm && sudo rpm -ivh MySQL-client-5.6.35-1.linux_glibc2.5.x86_64.rpm

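# check that the port is in use

$sudo service mysql start # MySQL listens on port 3306

$netstat -nat # check the port

The initial MySQL root password is at the end of /root/.mysql_secret

Log in to MySQL

$mysql -u root -p # enter MySQL

mysql> set password for root@localhost = password('123456'); # change the password to 123456

mysql> grant all on *.* to root@'master' identified by '123456' ; # grant access to the three machines (repeat per hostname)

mysql> flush privileges; # apply the grants

mysql> exit

# install the remaining MySQL packages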

$sudo rpm -ivh MySQL-devel-5.6.35-1.linux_glibc2.5.x86_64.rpm && sudo rpm -ivh MySQL-embedded-5.6.35-1.linux_glibc2.5.x86_64.rpm && sudo rpm -ivh MySQL-shared-5.6.35-1.linux_glibc2.5.x86_64.rpm && sudo rpm -ivh MySQL-shared-compat-5.6.35-1.linux_glibc2.5.x86_64.rpm && sudo rpm -ivh MySQL-test-5.6.35-1.linux_glibc2.5.x86_64.rpm

For the steps to uninstall MySQL, see: https://jingyan.baidu.com/article/4b52d702db8a82fc5c774b92.html

6. Install Cloudera Manager

# install the required packages

$sudo yum -y install chkconfig python bind-utils psmisc libxslt zlib sqlite cyrus-sasl-plain cyrus-sasl-gssapi fuse portmap fuse-libs redhat-lsb

Install the Cloudera Manager server

Upload cloudera-manager-el6-cm5.8.0_x86_64.tar.gz to /opt

$sudo mv ~/cloudera-manager-el6-cm5.8.0_x86_64.tar.gz /opt

$sudo tar -xvf cloudera-manager-el6-cm5.8.0_x86_64.tar.gz

Edit the agent configuration

$sudo vim /opt/cm-5.8.0/etc/cloudera-scm-agent/config.ini

server_host=localhost => server_host=master

Deploy the MySQL connector jar on CM

$sudo mv ~/mysql-connector-java-5.1.27-bin.jar /opt/cm-5.8.0/share/cmf/lib

Copy CM to the other machines

$sudo scp -r cm-5.8.0 slave2:/opt/

Configure permissions for the CM database by adding a temp user

$mysql -u root -p

mysql> grant all privileges on *.* to 'temp'@'%' identified by 'temp' with grant option ;

mysql> flush privileges ;

Initialize CM

$cd /opt/cm-5.8.0/share/cmf/schema

$sudo ./scm_prepare_database.sh mysql -h master -utemp -ptemp --scm-host master scm scm scm

7. CDH configuration

Upload the downloaded CDH parcel to the master machine

$sudo mv ~/CDH-5.8.0-1.cdh5.8.0.p0.42-el6.parcel /opt/cloudera/parcel-repo && sudo mv ~/CDH-5.8.0-1.cdh5.8.0.p0.42-el6.parcel.sha /opt/cloudera/parcel-repo

8. Start the CM server and CM agent

$sudo /opt/cm-5.8.0/etc/init.d/cloudera-scm-server start

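# if this fails, add the user: useradd --system --home=/opt/cm-5.8.0/run/cloudera-scm-server --shell=/bin/false --comment "Cloudera SCM User" cloudera-scm

Web login

# add the hostnames and IPs to the Windows hosts file

Open the hosts file in c:\windows\system32\drivers\etc and add the following:

192.168.15.212 chris-master

#192.168.15.110 chris-slave1

192.168.15.211 chris-slave2

Open http://chris-master:7180 in a browser

The default username and password are both admin; accept the defaults on every screen (if the page never loads, reinstall starting from the Java step)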

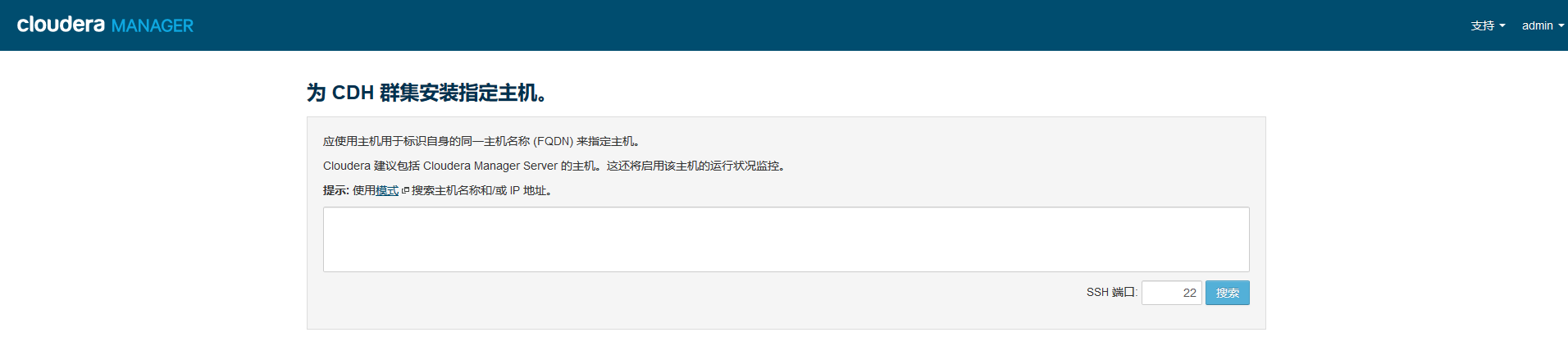

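No hosts are visible at this step because the agent has not been started yet

$sudo /opt/cm-5.8.0/etc/init.d/cloudera-scm-agent start # run on every machine

If it fails to start, create the run directory yourself

$sudo mkdir /opt/cm-5.8.0/run/cloudera-scm-agent # then go back to the previous step and start the agent again

If it still does not work, check the log for the specific cause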

$cd /opt/cm-5.8.0/log/cloudera-scm-agent

$cat cloudera-scm-agent.out

# error "/usr/bin/env: python2.6: No such file or directory": Python 2.6 is required; the OS version does not match, so switch to a different CM version
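The agent log can get long, so rather than reading all of it with cat, it is often quicker to pull out only the lines that look like failures. A rough sketch follows; the patterns are my own guess at what is worth matching, and recent_errors is a hypothetical helper name.

```shell
# Print up to the last 20 lines of a log that look like errors,
# prefixed with their line numbers.
# recent_errors is a hypothetical helper name; the patterns are assumptions.
recent_errors() {
    grep -nE 'ERROR|Traceback|No such file or directory' "$1" | tail -n 20
}

# Usage:
#   recent_errors /opt/cm-5.8.0/log/cloudera-scm-agent/cloudera-scm-agent.out
```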

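Refresh the page

Two warnings appear:

$echo 10 >/proc/sys/vm/swappiness # cat /proc/sys/vm/swappiness should then print 10; run on both machines; requires root

$echo never > /sys/kernel/mm/transparent_hugepage/defrag

Choose custom services and select HDFS

Then keep accepting the default options; if a problem comes up during configuration, check the detailed output log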