Prerequisites

A working Hadoop environment

The Scala and Spark installation packages, already downloaded

1. Install Scala

tar -xzvf scala-2.12.3.tgz

2. Install Spark

tar -xzvf spark-2.2.0-bin-hadoop2.7.tgz

3. Edit /etc/profile and add the following lines

JAVA_HOME=/usr/java/jdk1.8.0_144/

HADOOP_HOME=/opt/hadoop-2.7.4

SCALA_HOME=/opt/scala-2.12.3

SPARK_HOME=/opt/spark-2.2.0-bin-hadoop2.7

PATH=$PATH:$JAVA_HOME/bin:/usr/bin:/usr/sbin:/bin:/sbin:/usr/X11R6/bin:$HADOOP_HOME/bin:$SPARK_HOME/bin:$SCALA_HOME/bin

CLASSPATH=.:$JAVA_HOME/lib/tools.jar:$JAVA_HOME/lib/dt.jar

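export JAVA_HOME HADOOP_HOME SCALA_HOME SPARK_HOME PATH CLASSPATH

Run source /etc/profile (or log in again) so that the new variables take effect in the current shell.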
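A quick way to catch a mistyped path is to confirm that each variable points at a directory that actually exists. The sketch below is only an illustration: `check_home` is a hypothetical helper, not something shipped with Hadoop or Spark.

```shell
# check_home NAME DIR: report whether DIR is set and is an existing directory.
# Hypothetical helper for illustration; it is not part of Hadoop or Spark.
check_home() {
    name="$1"
    dir="$2"
    if [ -n "$dir" ] && [ -d "$dir" ]; then
        echo "$name ok"
    else
        echo "$name missing"
        return 1
    fi
}

# Example usage once /etc/profile has been reloaded:
#   check_home JAVA_HOME   "$JAVA_HOME"
#   check_home HADOOP_HOME "$HADOOP_HOME"
#   check_home SCALA_HOME  "$SCALA_HOME"
#   check_home SPARK_HOME  "$SPARK_HOME"
```

Any line that prints "missing" means the corresponding path in /etc/profile does not match where the package was extracted.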

4. Start Spark

/opt/spark-2.2.0-bin-hadoop2.7/sbin/start-all.sh

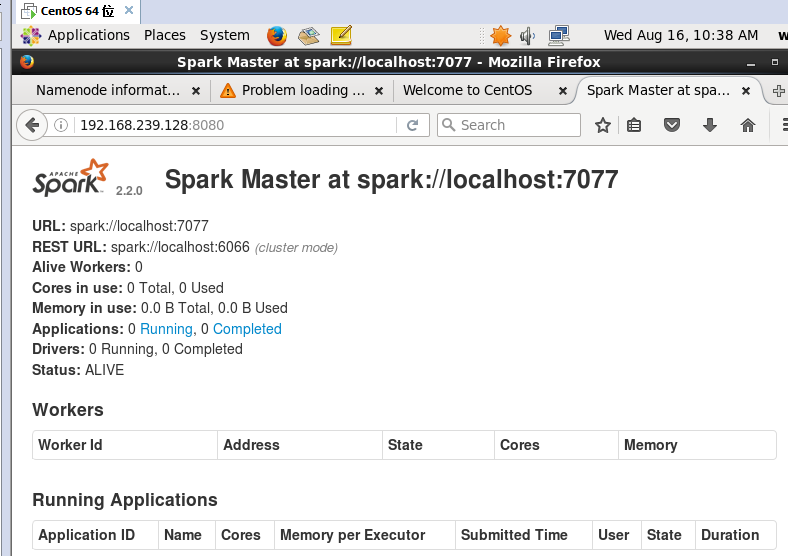
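Besides reading the script output, the running daemons can be checked with the JDK's `jps` tool, which lists a standalone master as `Master` and a worker as `Worker`. The following is a minimal sketch; `daemon_status` is a hypothetical helper that only parses `jps`-style output from standard input.

```shell
# daemon_status NAME: read "<pid> <name>" lines (the format jps prints)
# from stdin and print "running" if a JVM called NAME is listed,
# otherwise "stopped". Hypothetical helper for illustration.
daemon_status() {
    if awk -v name="$1" '$2 == name { found = 1 } END { exit !found }'; then
        echo running
    else
        echo stopped
    fi
}

# Example usage on the Spark host:
#   jps | daemon_status Master
#   jps | daemon_status Worker
```

If either call prints "stopped", the log files under the Spark installation's logs directory are usually the first place to look.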

5. Verify

Open http://<host IP>:8080 in a browser to view the Spark master web UI.

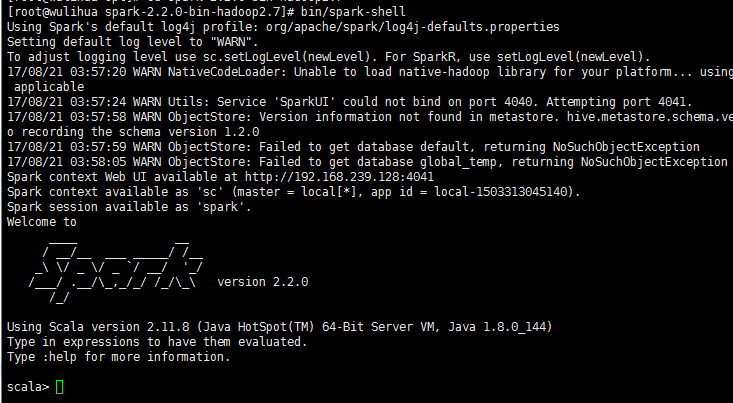
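On a server without a browser, the same check can be approximated from the command line. This is a sketch under the assumption that `curl` is installed; `master_ui_url` and `master_ui_up` are hypothetical helper names, and the port defaults to 8080 as in the URL above.

```shell
# master_ui_url HOST [PORT]: print the master web UI address.
# Hypothetical helper; PORT defaults to 8080.
master_ui_url() {
    echo "http://$1:${2:-8080}"
}

# master_ui_up HOST [PORT]: succeed if the page can be fetched
# without an HTTP error within 5 seconds. Assumes curl is installed.
master_ui_up() {
    curl -sf -o /dev/null --max-time 5 "$(master_ui_url "$@")"
}

# Example usage (replace the address with the real host IP):
#   master_ui_up 192.168.1.10 && echo "web UI reachable"
```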

Change to the Spark installation directory and run spark-shell.
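As a non-interactive alternative, the SparkPi example that ships with the Spark binary distribution can serve as a smoke test. The sketch below assumes SPARK_HOME is set as in step 3; `spark_smoke_test` is a hypothetical wrapper around the bundled `bin/run-example` script.

```shell
# spark_smoke_test: run the bundled SparkPi example with 10 partitions
# and keep only the result line. Log output on stderr is discarded.
# Hypothetical wrapper; requires SPARK_HOME to be set.
spark_smoke_test() {
    "${SPARK_HOME:?SPARK_HOME is not set}/bin/run-example" SparkPi 10 2>/dev/null |
        grep "Pi is roughly"
}

# Example usage:
#   spark_smoke_test
```

A line starting with "Pi is roughly" indicates that Spark was able to start and run a job.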