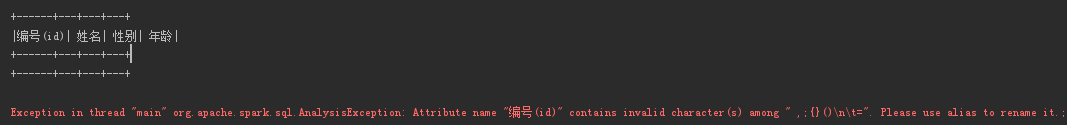

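Spark supports Parquet natively, and Parquet is its recommended storage format. When writing Parquet, however, Spark places some requirements on column names: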

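1. A column name must not contain any of the characters in " ,;{}()\n\t=" (space, comma, semicolon, curly braces, parentheses, newline, tab, equals sign).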

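import java.util.ArrayList;
import java.util.List;
import org.apache.spark.sql.Dataset;
import org.apache.spark.sql.Row;
import org.apache.spark.sql.SparkSession;
import org.apache.spark.sql.types.DataTypes;
import org.apache.spark.sql.types.StructField;
import org.apache.spark.sql.types.StructType;

SparkSession sparkSession = SparkSession.builder().appName("Test").master("local")
        .config("spark.sql.inMemoryColumnarStorage.compressed", "true").getOrCreate();
// A given header string; note that the first name contains parentheses
String colstr = "编号(id),姓名,性别,年龄";
// Split on commas
String[] cols = colstr.split(",");
List<StructField> fields = new ArrayList<>();
for (String fieldName : cols) {
    // Create a StructField; the type is unknown, so default to string
    StructField field = DataTypes.createStructField(fieldName, DataTypes.StringType, true);
    fields.add(field);
}
// Create the StructType
StructType schema = DataTypes.createStructType(fields);
List<Row> rows = new ArrayList<>();
// Create a Dataset that contains only the schema (no rows)
Dataset<Row> data = sparkSession.createDataFrame(rows, schema);
data.show();
// Save in Parquet format; under rule 1 the name "编号(id)" is expected to be rejected here
data.write().format("parquet").save("/data.parquet");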
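
One possible way to stay within rule 1 is to check and clean each column name before building the schema. The sketch below is only an illustration under assumptions: the class `ParquetColumnNames`, its methods `isValid` and `sanitize`, and the policy of replacing each rejected character with an underscore are all hypothetical and not part of Spark. The header string is an ASCII stand-in for the one used above.

```java
import java.util.regex.Pattern;

// Hypothetical helper, not part of Spark: checks and cleans column names
// against the character list from rule 1: " ,;{}()\n\t="
public class ParquetColumnNames {
    // Character class matching any single rejected character
    private static final Pattern INVALID = Pattern.compile("[ ,;{}()\\n\\t=]");

    // Returns true if the name contains none of the rejected characters
    public static boolean isValid(String name) {
        return !INVALID.matcher(name).find();
    }

    // Replaces every rejected character with an underscore (assumed policy)
    public static String sanitize(String name) {
        return INVALID.matcher(name).replaceAll("_");
    }

    public static void main(String[] args) {
        // ASCII stand-in for the header string used in the example above
        String[] cols = "No(id),name,sex,age".split(",");
        for (String col : cols) {
            System.out.println(col + " -> " + sanitize(col) + " (valid before: " + isValid(col) + ")");
        }
    }
}
```

In the code above, such a helper could be applied to `fieldName` before calling `DataTypes.createStructField`. For a Dataset that already exists, `withColumnRenamed` could be used to apply the cleaned name. Note that replacing characters can make two different names collide, so a real implementation would probably also need to check for duplicates.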