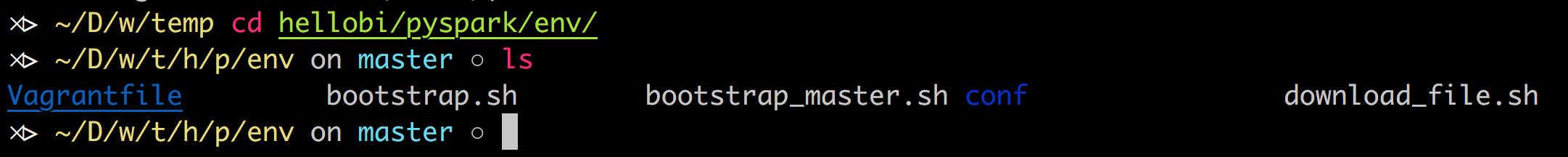

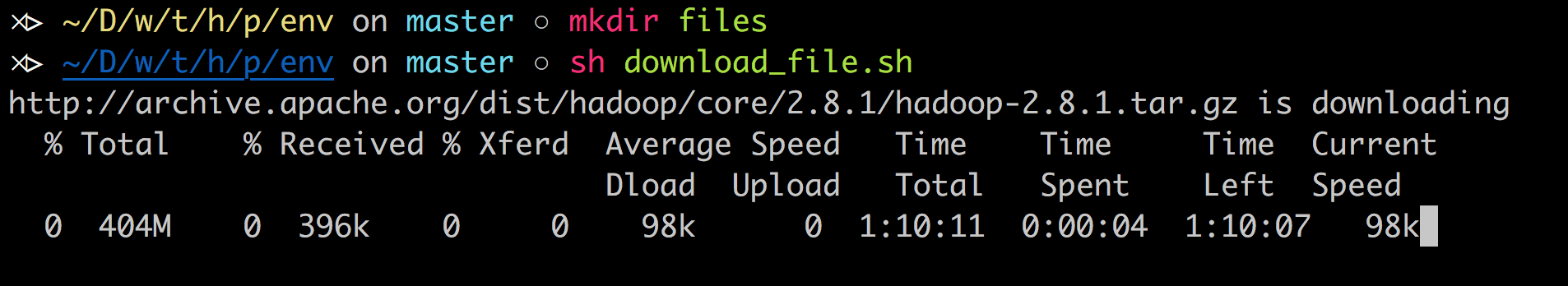

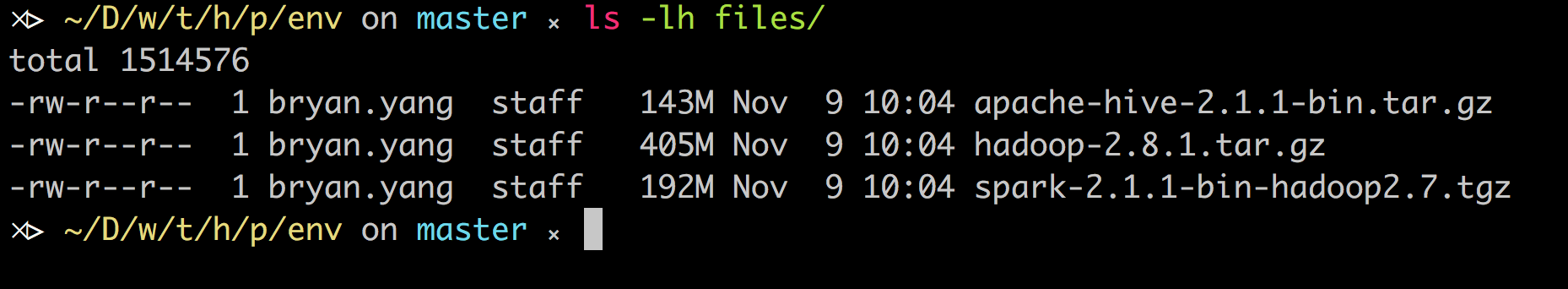

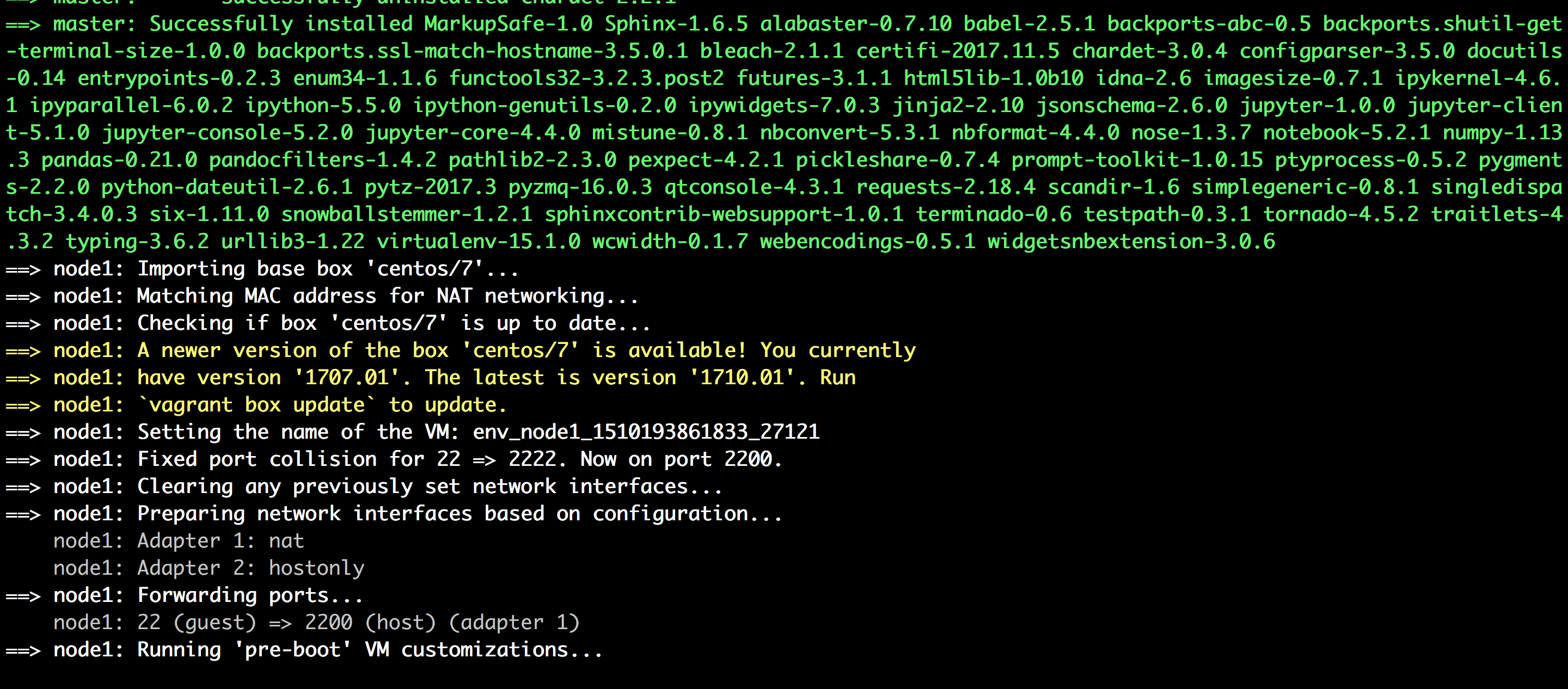

- download files

mkdir files

sh download_file.sh

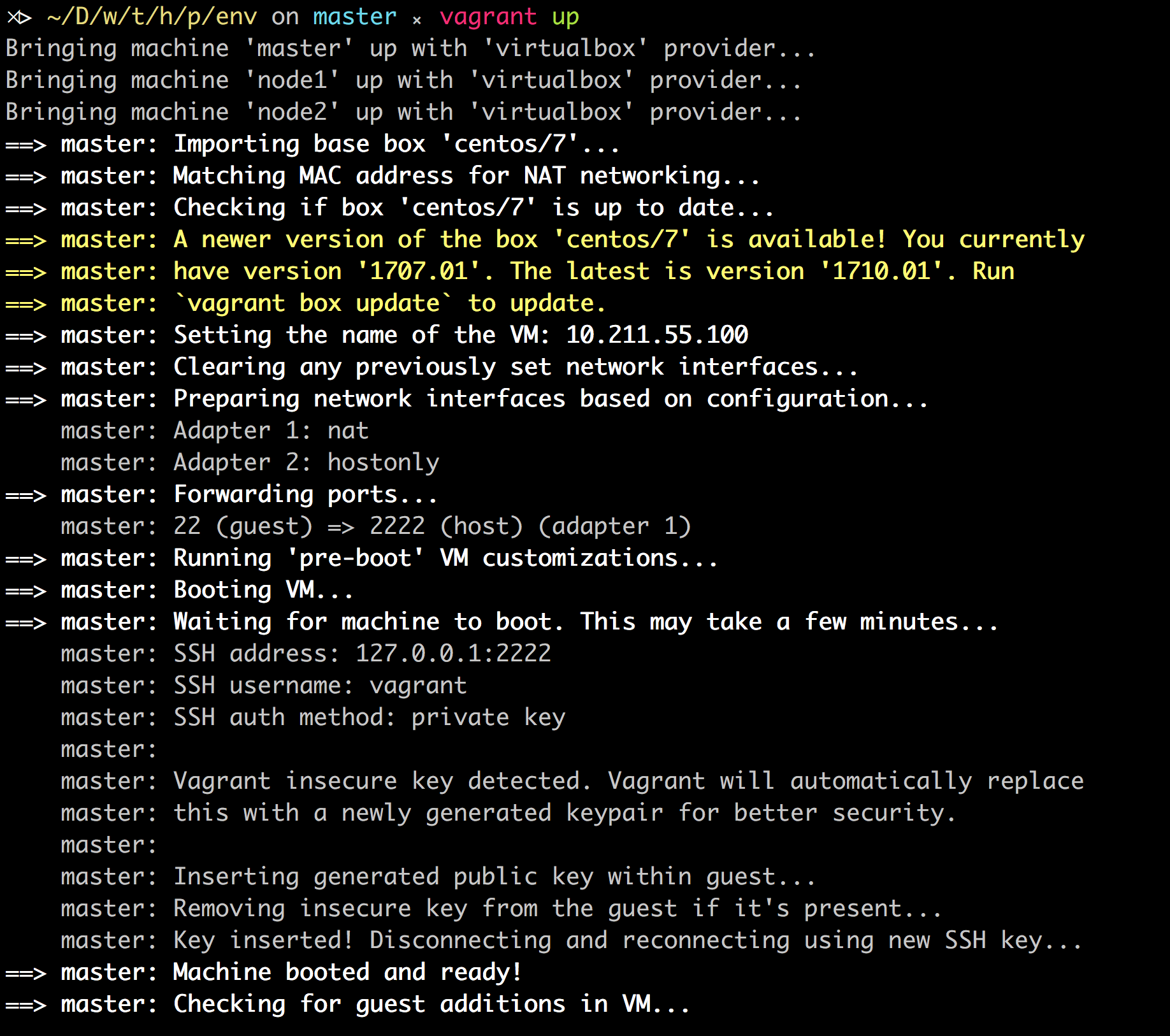

- Everything is running normally; don't be alarmed if some red text shows up

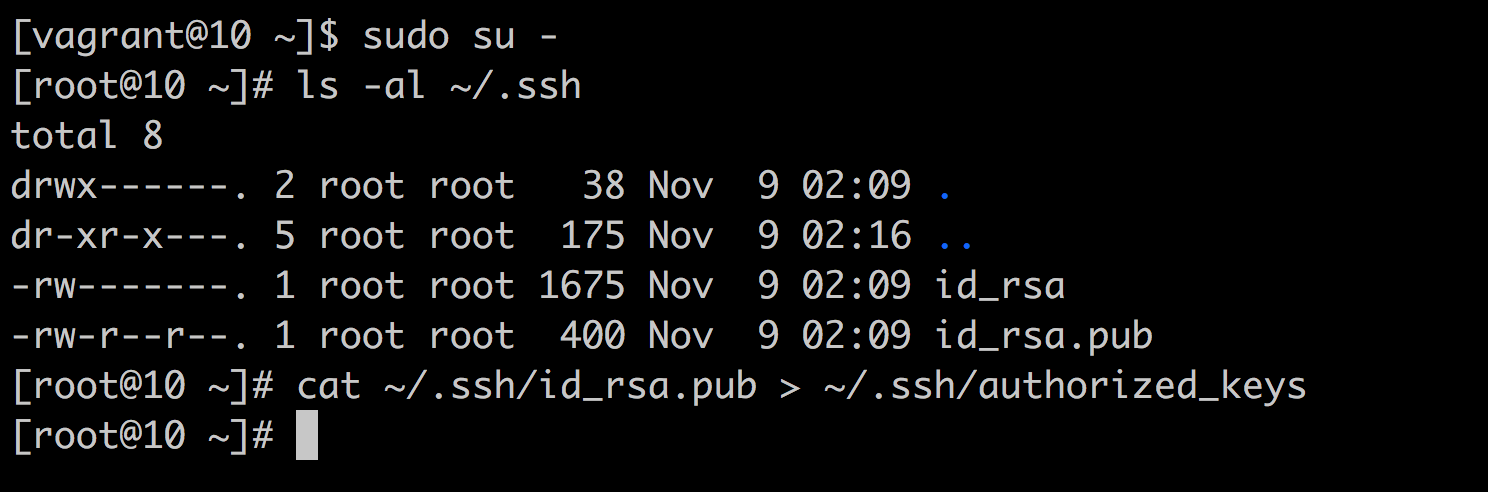

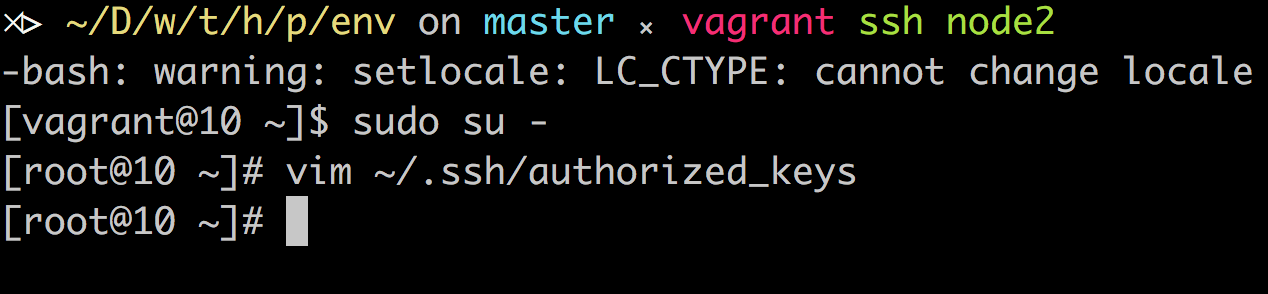

- copy ssh key

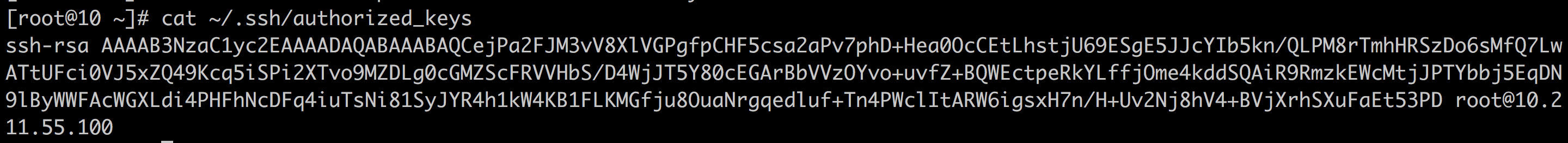

Log in to the root account on master and copy id_rsa.pub into authorized_keys

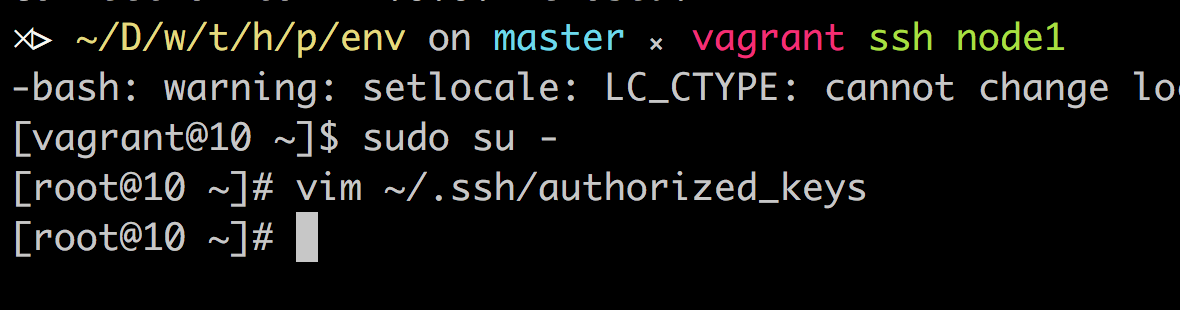

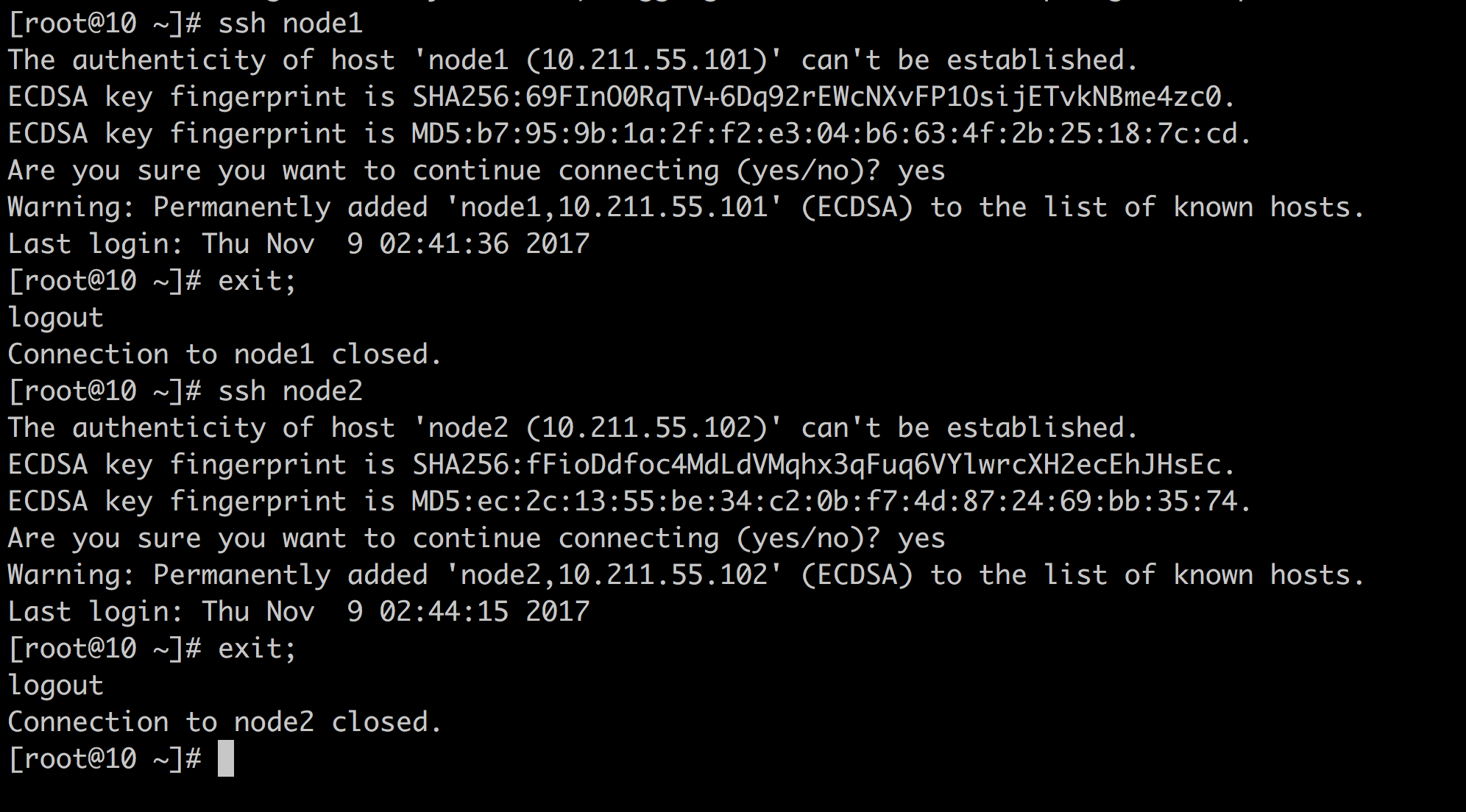

Next, send the public key to the node

Back on master, confirm that the connection works
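The key-copy steps above can be sketched as a small shell helper. This is a minimal sketch, not the script used in the screenshots: the function name `append_key` is hypothetical, and the `node1` hostname in the note below is an assumption. It appends a public key to an `authorized_keys` file only when that key is not already present, then tightens the file permissions.

```shell
#!/bin/sh
# append_key PUBKEY_FILE AUTH_FILE  (hypothetical helper, not from the original post)
# Appends the public key to AUTH_FILE unless the exact line is already there.
append_key() {
    pubkey_file=$1
    auth_file=$2
    mkdir -p "$(dirname "$auth_file")"
    touch "$auth_file"
    # -x: whole-line match, -F: fixed string, -f: read the pattern from the key file
    if ! grep -qxFf "$pubkey_file" "$auth_file"; then
        cat "$pubkey_file" >> "$auth_file"
    fi
    # sshd may refuse an authorized_keys file that other users can write to
    chmod 600 "$auth_file"
}
```

On master as root, `append_key ~/.ssh/id_rsa.pub ~/.ssh/authorized_keys` would cover the local step. For the node, `ssh-copy-id root@node1` does the same over SSH, and `ssh root@node1 hostname` should then return without asking for a password.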

HADOOP

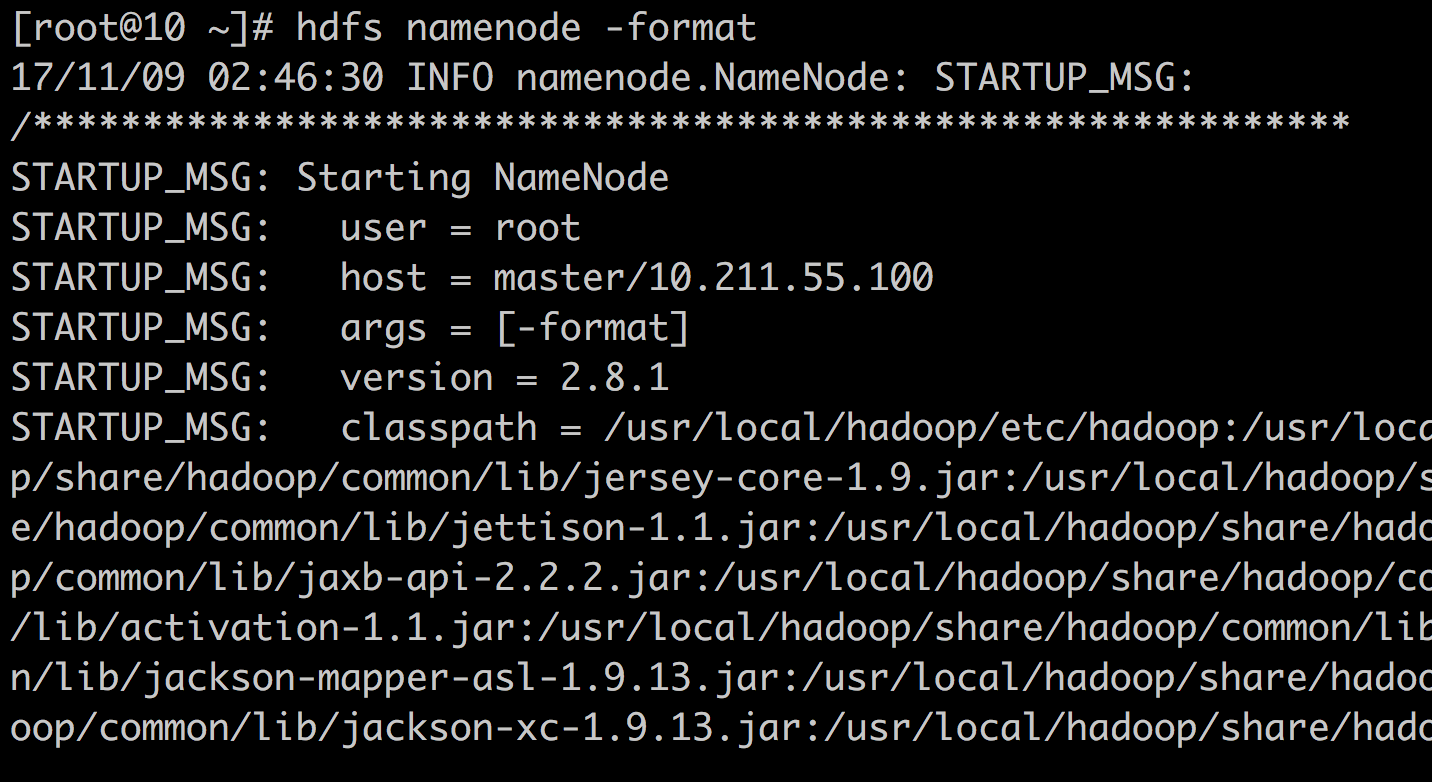

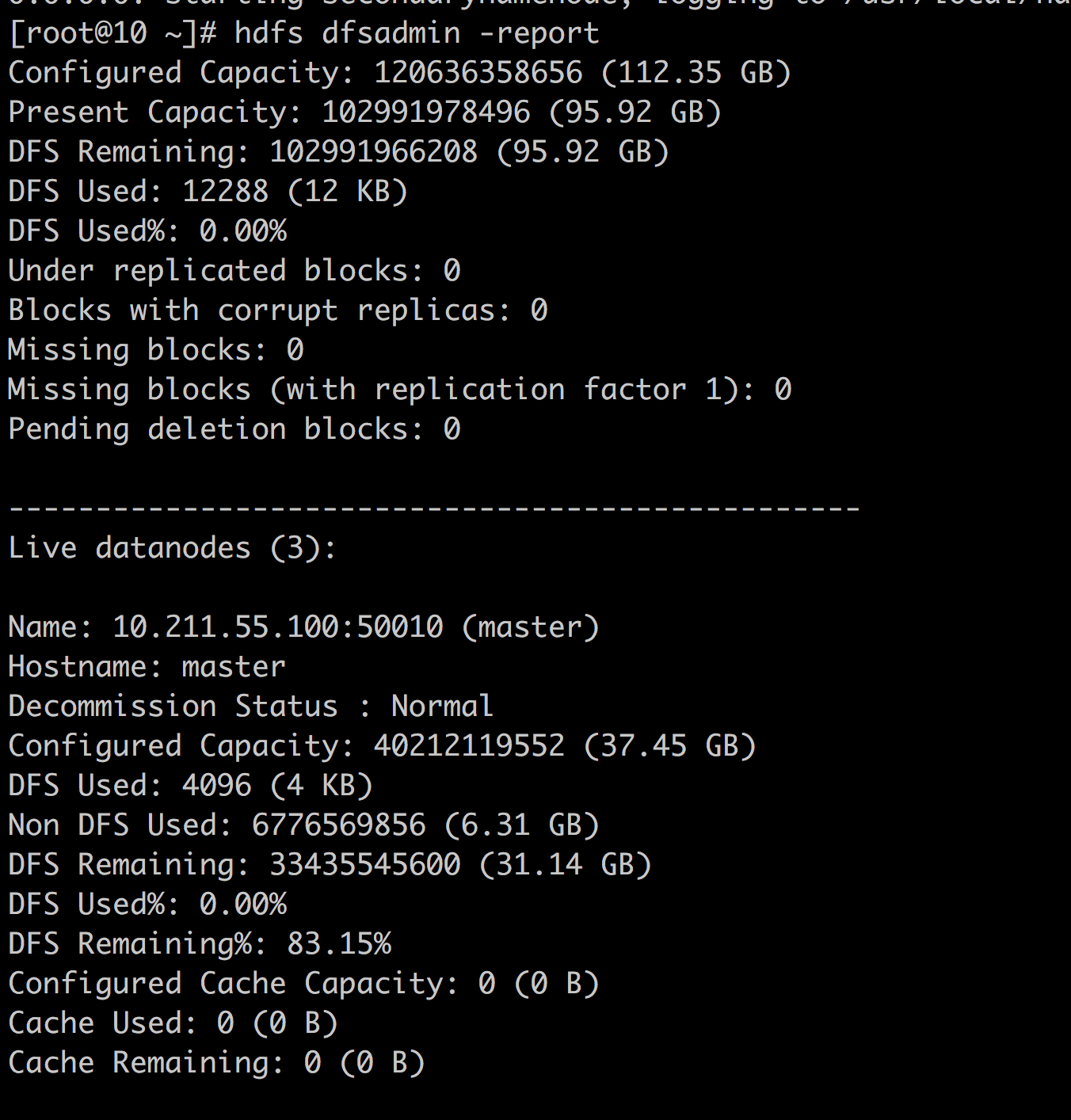

- Format namenode

hdfs namenode -format

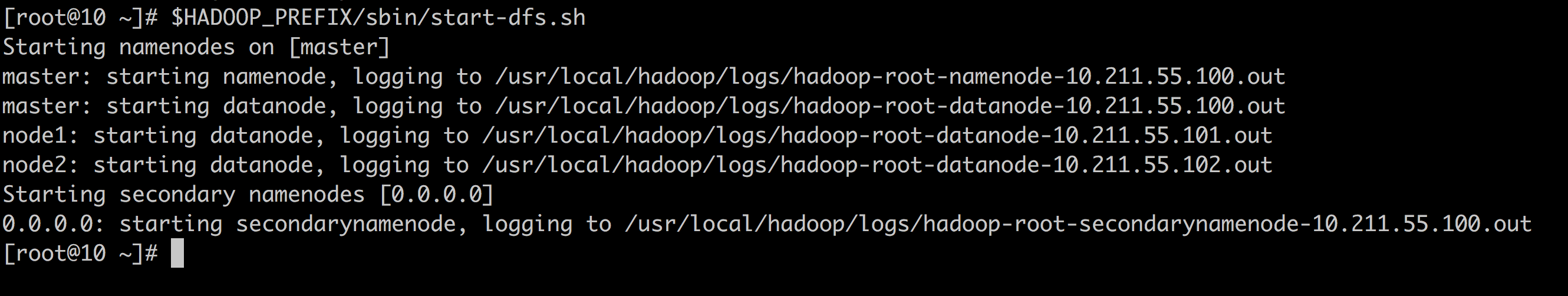

- Start HDFS

$HADOOP_PREFIX/sbin/start-dfs.sh

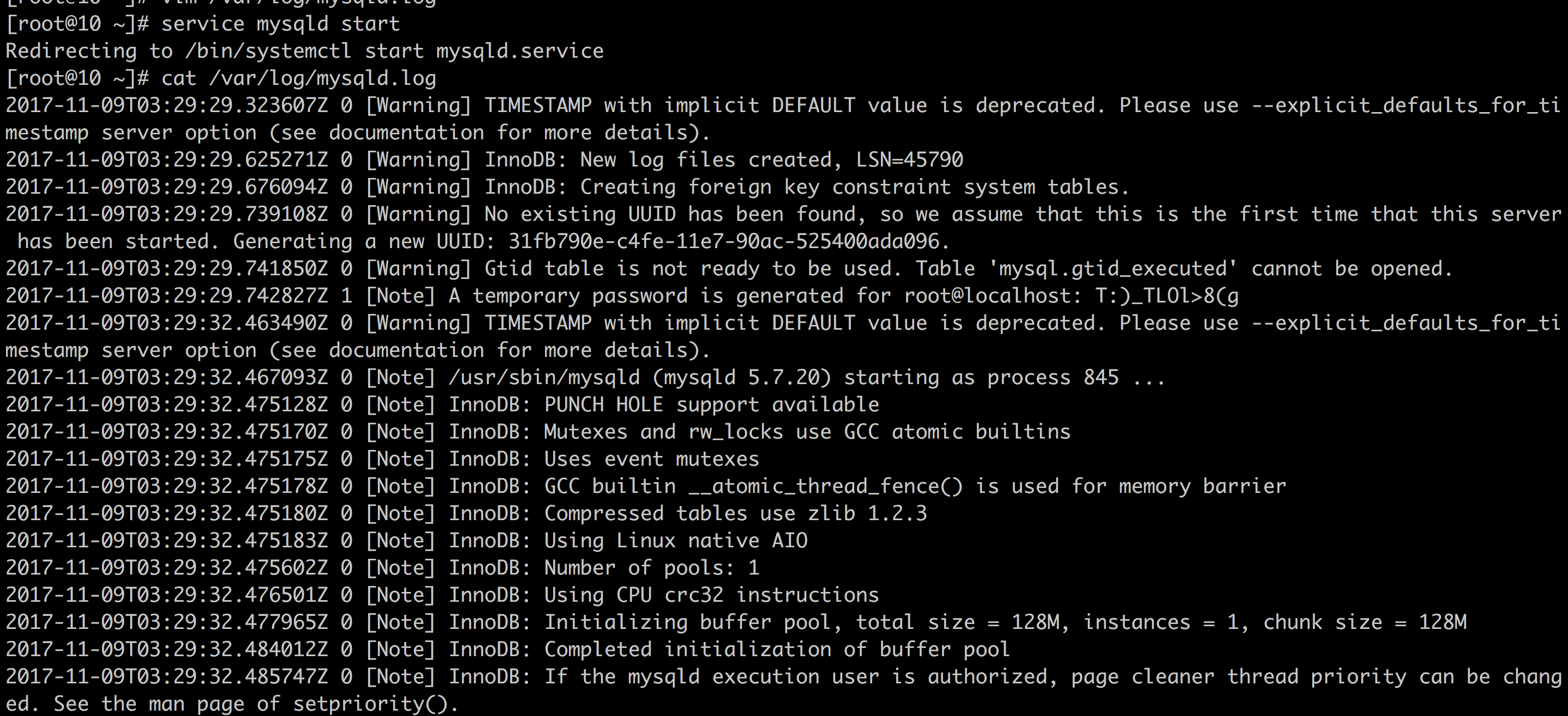

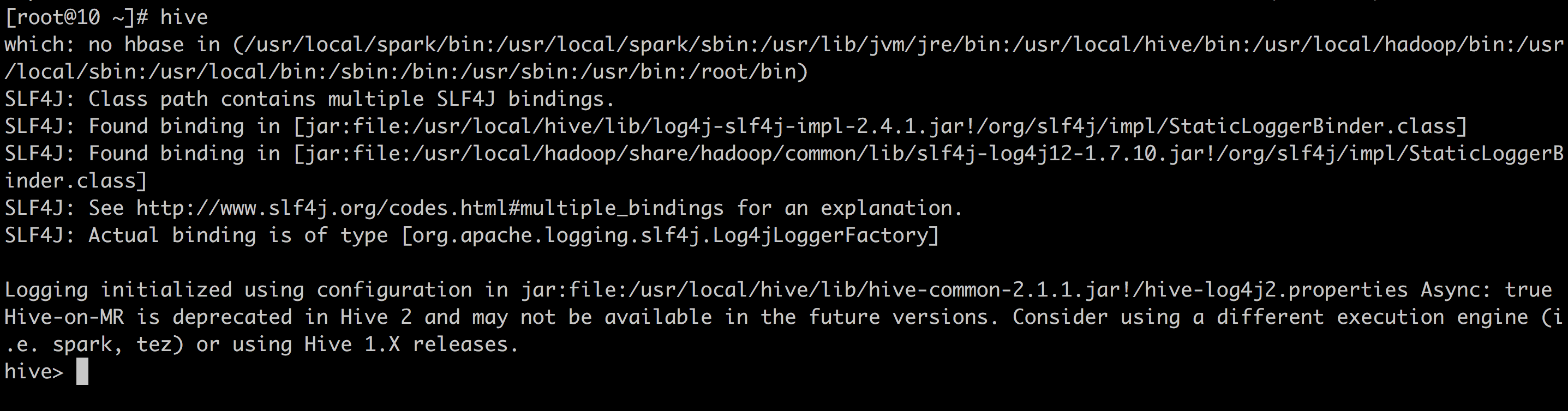
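After `start-dfs.sh` returns, one way to confirm the daemons came up is to look for them in the output of `jps`, the JDK's Java process lister. A minimal sketch, assuming `jps` is on the PATH; the helper name `has_daemon` is hypothetical:

```shell
#!/bin/sh
# has_daemon NAME: reads `jps` output on stdin and succeeds only if a Java
# process named exactly NAME is listed. jps prints "<pid> <name>" per line.
has_daemon() {
    awk -v name="$1" '$2 == name { found = 1 } END { exit !found }'
}

# Example (run on master):
#   jps | has_daemon NameNode && echo "NameNode is running"
```

Matching the second field exactly keeps `SecondaryNameNode` from being mistaken for `NameNode`, which a plain `grep NameNode` would do.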

HIVE

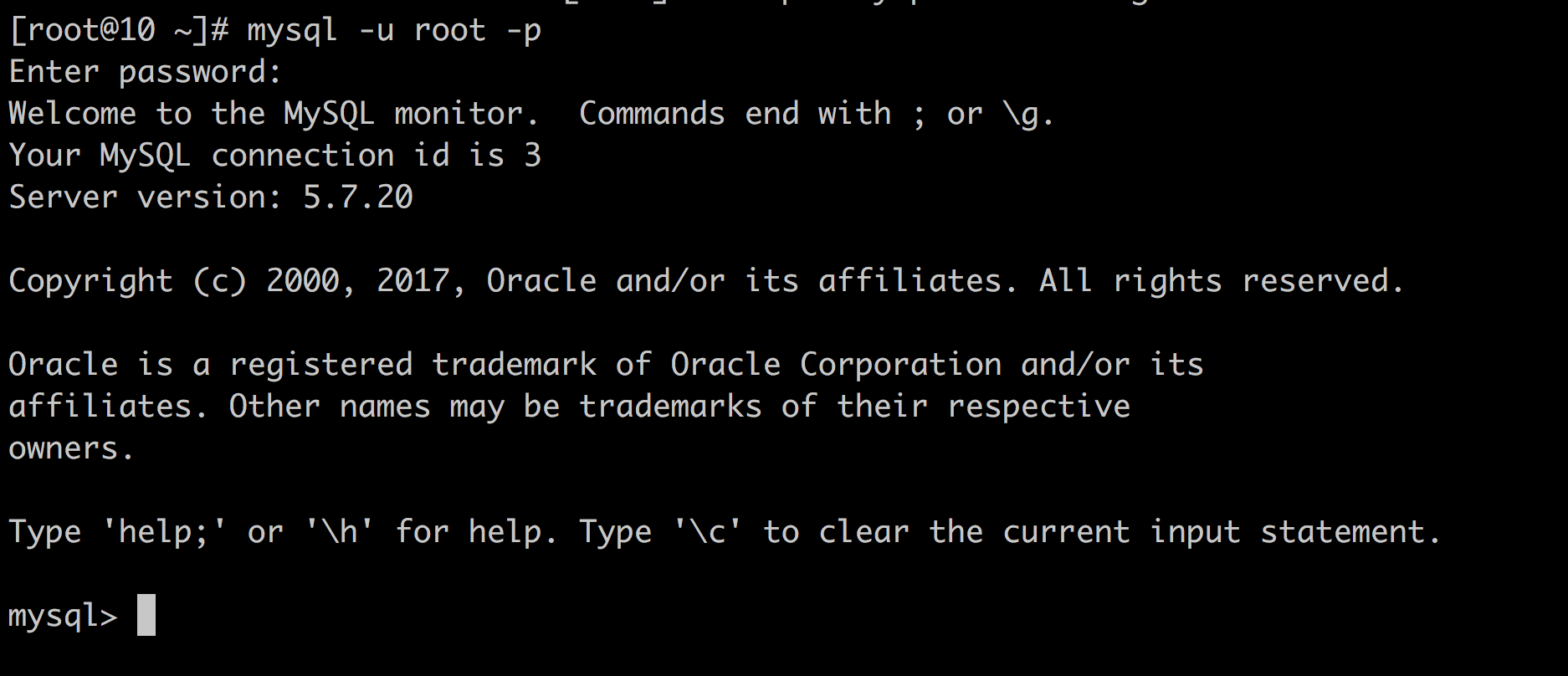

- Start MySQL

service mysqld start

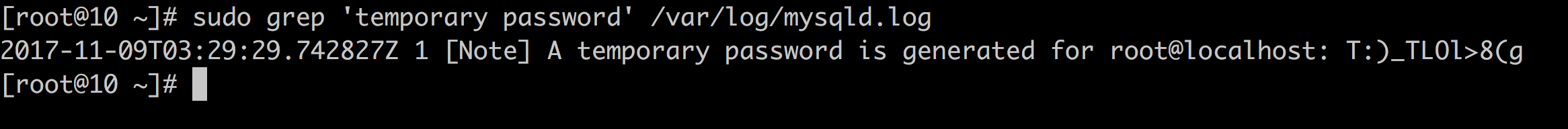

- Find the temporary password

sudo grep 'temporary password' /var/log/mysqld.log

ALTER USER 'root'@'localhost' IDENTIFIED BY '!QAZ2wsx';

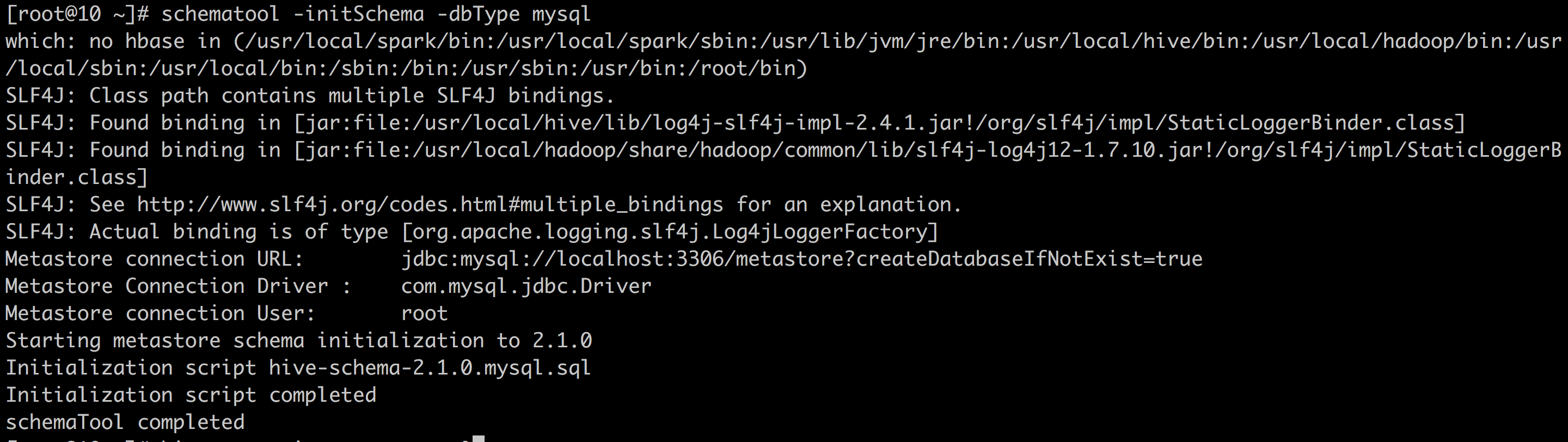
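The `grep` step prints the whole log line, and the password is its last field. A minimal sketch for pulling it out, assuming the MySQL 5.7-style log line that ends in `root@localhost: <password>`; the helper name `temp_password` is hypothetical:

```shell
#!/bin/sh
# temp_password LOGFILE: print the most recent temporary root password in LOGFILE.
# Assumes the matching line ends with "... root@localhost: <password>" and that
# the password itself contains no spaces.
temp_password() {
    awk '/temporary password/ { pw = $NF } END { if (pw != "") print pw }' "$1"
}
```

Run as root, `temp_password /var/log/mysqld.log` prints just the password. Log in with `mysql -u root -p`, paste it at the prompt, and issue the `ALTER USER` statement from the MySQL shell.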

schematool -initSchema -dbType mysql

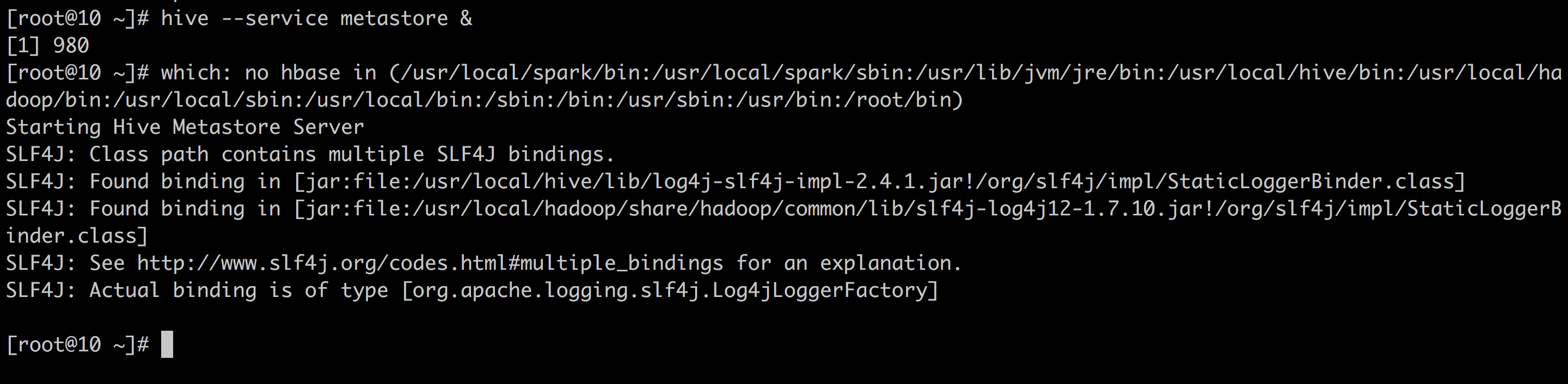

hive --service metastore &

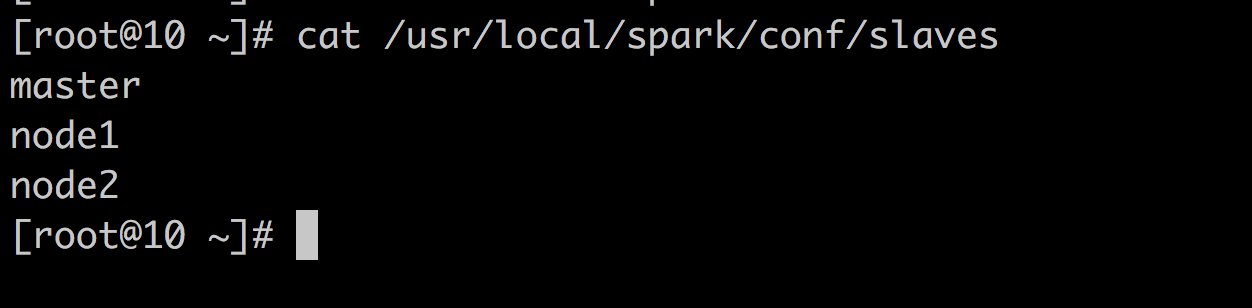

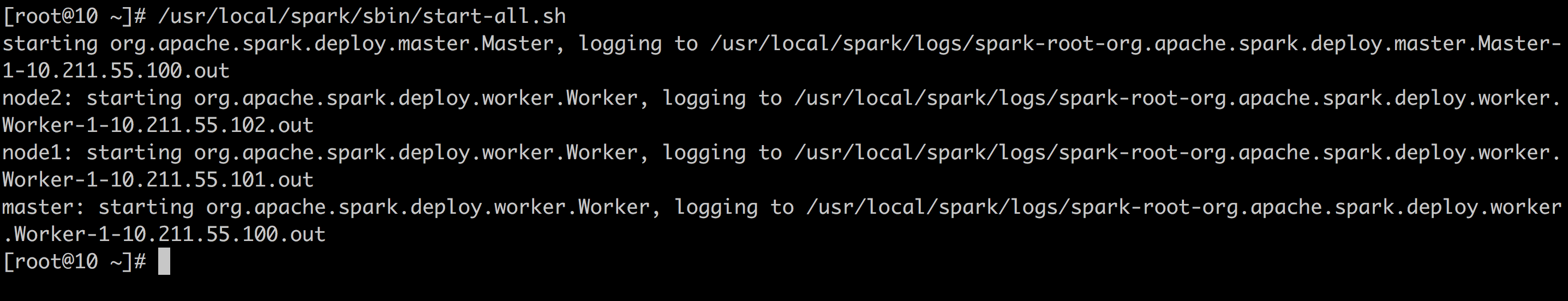

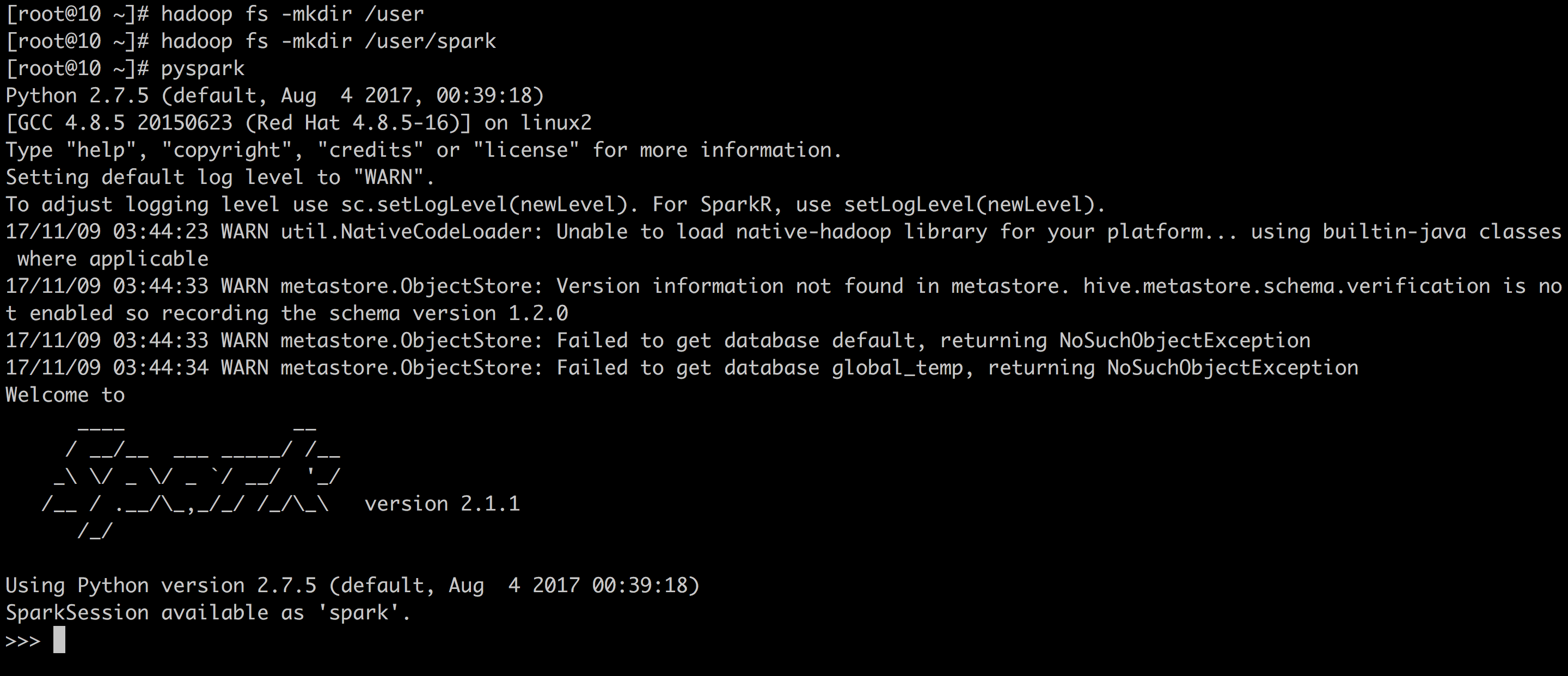

SPARK

hadoop fs -mkdir /user

hadoop fs -mkdir /user/spark

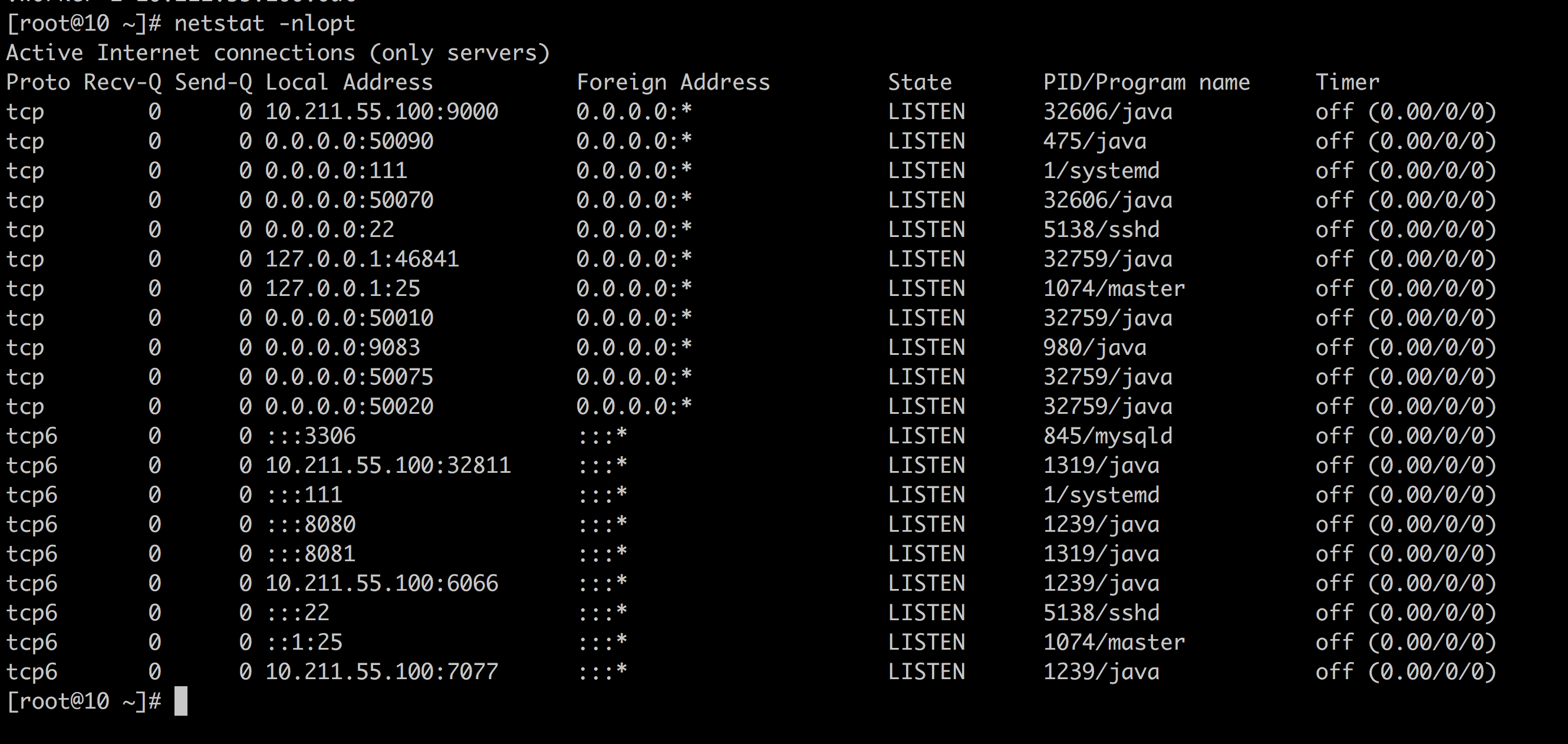

Verify the services

9000: Hadoop Master

50070: Hadoop UI

9083: Hive metastore

8080: Spark UI

7077: Spark Master
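The port list above can be checked in one pass rather than by hand. A minimal sketch, assuming a netcat (`nc`) that supports `-z` and `-w`; both function names are hypothetical, and the default host `localhost` is an assumption:

```shell
#!/bin/sh
# service_for_port PORT: map a port from the list above to its service name.
service_for_port() {
    case $1 in
        9000)  echo "Hadoop Master" ;;
        50070) echo "Hadoop UI" ;;
        9083)  echo "Hive metastore" ;;
        8080)  echo "Spark UI" ;;
        7077)  echo "Spark Master" ;;
        *)     echo "unknown"; return 1 ;;
    esac
}

# check_services [HOST]: probe each port with `nc -z` (connect only, send nothing)
# and print one line per service with its status.
check_services() {
    host=${1:-localhost}
    for port in 9000 50070 9083 8080 7077; do
        if nc -z -w 2 "$host" "$port" 2>/dev/null; then
            status=up
        else
            status=DOWN
        fi
        printf '%-5s %-15s %s\n' "$port" "$(service_for_port "$port")" "$status"
    done
}
```

Calling `check_services` on master (or `check_services <master-host>` from another machine) prints one row per port. A `DOWN` row points to which service's log to read first.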

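Setting up an environment is never easy, and a one-click installer is the product of a lot of accumulated effort. You have to build that skill yourself: read the log files, read the error messages, and keep experimenting until it works.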